Adapted from my conversation with Shane Hastie on the InfoQ Engineering Culture Podcast (July 2022).

When people ask me about engineering productivity, the first thing I do is push back on the word "productivity" itself. It's not that productivity doesn't matter; it's that the word has become a proxy for something much narrower than what actually drives great engineering. We don't want to measure engineering productivity. We want to have a holistic view. We want to make sure we're looking at engineers as people, and asking the right questions at the end of every day: What got in your way today? And what were you genuinely excited about?

Those two questions tell you far more than any throughput metric will.

For nearly eight years I led engineering teams at Pluralsight - first as Senior Director and later as Head of Engineering - where we built Flow, a software delivery intelligence platform. Part of what made that work uniquely interesting was that we used our own product. We got to see ourselves clearly, which left very little room to hide from our own blind spots. And what we found, over and over again, was that the teams delivering the best outcomes were not the ones with the most sophisticated processes. They were the ones where people genuinely liked working together.

The problem with the old playbook

There's a question I ask a lot of engineering leaders: "Are your teams set up for success?" It's a simple question, but it rarely gets a simple answer. What I hear most often is a version of the same thing: "We have an agile process. We have the right tools. So yes."

That answer tells me what I need to know. The process and the tools are the frame around the picture. They're not the picture itself.

So much of the advice circulating about engineering leadership was written for a different era - one where retention wasn't a crisis, where attrition didn't reshape a team every few quarters. When someone leaves, it puts a real burden on everyone who stays. It's not just about the work they took with them. It's about the energy and trust that erodes when a team has to keep rebuilding itself. You can't solve that with a better sprint ceremony.

What you need is a real focus on people, and a much broader view of everything that makes teams healthy.

The business case is real, even if it rarely gets framed that way. Replacing a single mid-level engineer is commonly estimated to cost between 50% and 200% of their annual salary once you account for recruiting, onboarding, and ramp time, and that estimate doesn't capture the institutional knowledge that walks out with them. A team that has rebuilt itself three times in two years isn't just slower. It's structurally incapable of sustained improvement, because improvement requires memory. When you're making the case upward for investing in team health, lead with this: developer satisfaction is one of the highest-return bets an engineering organization can make.

What a healthy team actually looks like

Developer satisfaction is the metric I keep coming back to. Not the survey score (though I do think surveys matter) but the actual condition of people's working lives. Are they able to make decisions close to the work? Do they understand the value of what they're producing? Do they feel empowered, or do they feel managed?

A healthy team is one where people genuinely like each other. That sounds soft, but it's not. When a team likes working together, they share information more freely, they catch each other's mistakes before those mistakes become incidents, they help each other grow. Code quality doesn't come from static analysis tools. At its core, it comes from people helping each other.

When things are already broken

Most articles on team health describe what good looks like. That's useful if you're building from scratch. It's much less useful if you inherited a team that's already burned out, already cynical, already convinced that another leadership initiative means another round of changes that will be forgotten in six months.

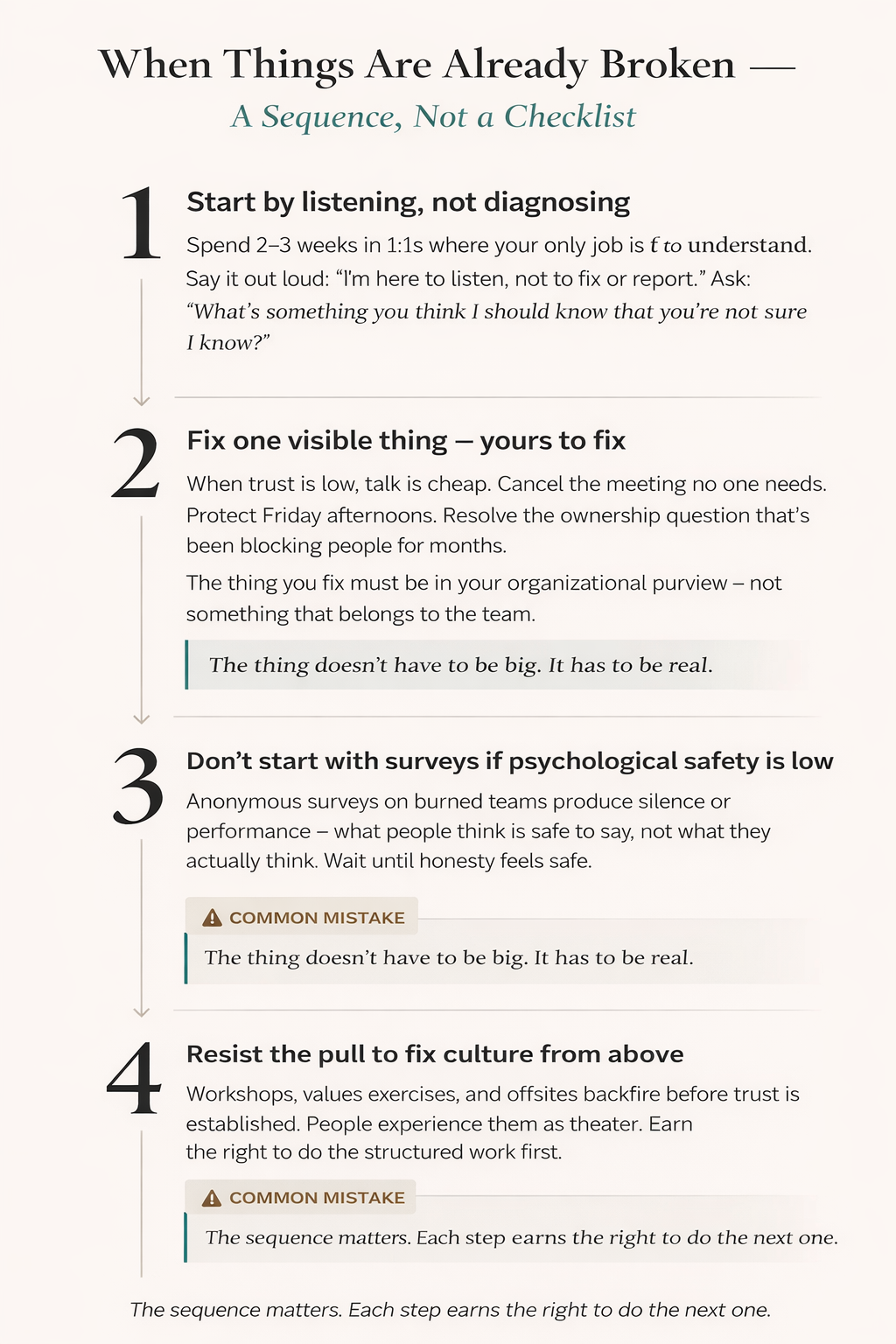

When things are already broken, the sequence matters. Here's what I've found works, and what doesn't.

Start by listening, not diagnosing. Before you run a survey or propose a new process, spend two to three weeks in one-on-ones where your only job is to understand what people are actually experiencing. Make it explicit at the start of each conversation that you're here to listen, not to fix, report, or evaluate. That framing matters because most engineers have been in 1:1s where honesty had consequences, and they've adjusted accordingly.

The questions you ask will determine what you hear. Vague questions get vague answers. A few that reliably open things up:

- "If you had a magic wand and could change one thing about how we work, what would it be?" The hypothetical framing removes the risk of it sounding like a complaint.

- "I'm still learning this team. What's something you think I should know that you're not sure I know?" This positions you as a student, not an authority - and gives people permission to surface things they've assumed leadership already knows and has chosen not to fix.

- "What's the most frustrating part of your week that probably doesn't show up in any meeting or status update?" This specifically gets at invisible friction, which is exactly what you're after.

- "If you were me, what would you do first?" When you ask for advice rather than information, it signals that you respect someone's judgment, not just their output. That distinction builds trust in a way that information-gathering questions generally don't.

Those questions open the door, but the follow-through is what matters. Most people give you the safe version first, the answer that's true but not quite the real thing. When that happens, don't move on. Respond with "tell me more" or "what else?" and then stay quiet. The second and third answers are usually where the honest signal lives. Silence is not awkward; it's an invitation. Most leaders fill it too quickly.

The goal isn't just to collect data. It's to establish that you'll hear hard things without immediately converting them into action items or forwarding them to the next level up.

Fix one visible thing quickly. When trust is low, talk is cheap. Find something that's genuinely frustrating the team, such as a broken tool, an unclear process, or a recurring meeting that wastes everyone's time, and fix it. You're not doing this to win points, but to demonstrate that what the team said matters. The thing you fix doesn't have to be big, but it has to be real.

One important distinction: the thing you fix should be yours to fix - something within your organizational purview, not something that belongs to the team. If engineers are frustrated by a flaky test suite, the answer isn't to go fix the tests yourself. But if they're losing two hours a week to a status meeting that exists because someone upstream needed visibility three years ago, that's yours to cancel. Other examples: committing to protected focus time on Friday afternoons, resolving an unclear ownership boundary that's causing engineers to block on each other, or finally getting a decision made on a tool question that's been dragging on for months. The common thread is that these are things only you have the standing to change, and that engineers experience as friction they can't remove themselves.

Don't start with surveys if psychological safety is already low. Anonymous surveys sound safe, but on teams where people have been burned before, they produce either silence or performance. You'll get data that tells you what people think is acceptable to say, not what they actually think. Save surveys for when you've built enough trust that people believe the results will be used honestly.

Resist the pull to fix culture top-down. The most common mistake I see senior leaders make when they inherit a dysfunctional team is launching a culture initiative - workshops, values exercises, team offsites. These aren't bad in themselves, but they backfire when done before trust is established. People experience them as theater. The sequence matters: earn the right to do the structured work by demonstrating first that you hear people and act on what you hear. Once that's in place, culture initiatives actually land.

Culture is something you build together, not install from above

This matters beyond broken teams. Even healthy organizations get it wrong by treating culture as something leadership designs and delivers. The programs that fail - values workshops, mandatory fun, recognition systems that feel performative - share a common flaw: they flow from the top down.

The things that actually shift culture are smaller and more organic. One practice I've seen work consistently is recognizing each other publicly and specifically. At Pluralsight, we did something called Gratitude Fridays: at the end of the week, anyone could call out a coworker who made their week better - not in a vague "great job" way, but in a specific, genuine way. I had a new engineer tell me he thought it was cheesy at first. Then someone recognized him for something real, and he understood immediately. That's how belonging actually forms.

What makes this different from the initiatives that feel creepy or surveillance-adjacent is the direction it flows. It's not leadership watching, it's peers seeing each other. The moment a well-meaning manager takes feedback from a check-in and turns it into a Zoom call that feels like monitoring, the honest responses disappear. Trust goes underground.

Creating trust means asking people what they want you to do with what they tell you. Sometimes people want you to fix something. Sometimes they just need to be heard. "What, if anything, would you like me to do about this?" is one of the most powerful questions I know how to ask as a leader. And sometimes the most valuable answer is: "Just listen."

I didn't always get this right. Early in my leadership career, I inherited a team that had experienced a lot of churn. I thought the right move was to be transparent: to share more context, to include people in decisions, to over-communicate. What I didn't account for was that the team had learned to treat management communication as a leading indicator of bad news. Every all-hands, every Slack update, and every one-on-one where I tried to be open created anxiety instead of relief. I had the right behavior and completely wrong timing. It took longer than I'd like to admit to realize that trust doesn't respond to information volume. It responds to consistency over time. You can't speed-run it.

Metrics that enable instead of punish

Engineering leaders are generally very comfortable using metrics to understand systems - uptime, latency, error rates. Where they get uncomfortable is using metrics to understand people. I think that discomfort usually comes from a fixation on single dimensions. We all know what happens when you optimize for one metric in isolation: the metric you focused on improves, and something else breaks. The bottleneck keeps moving.

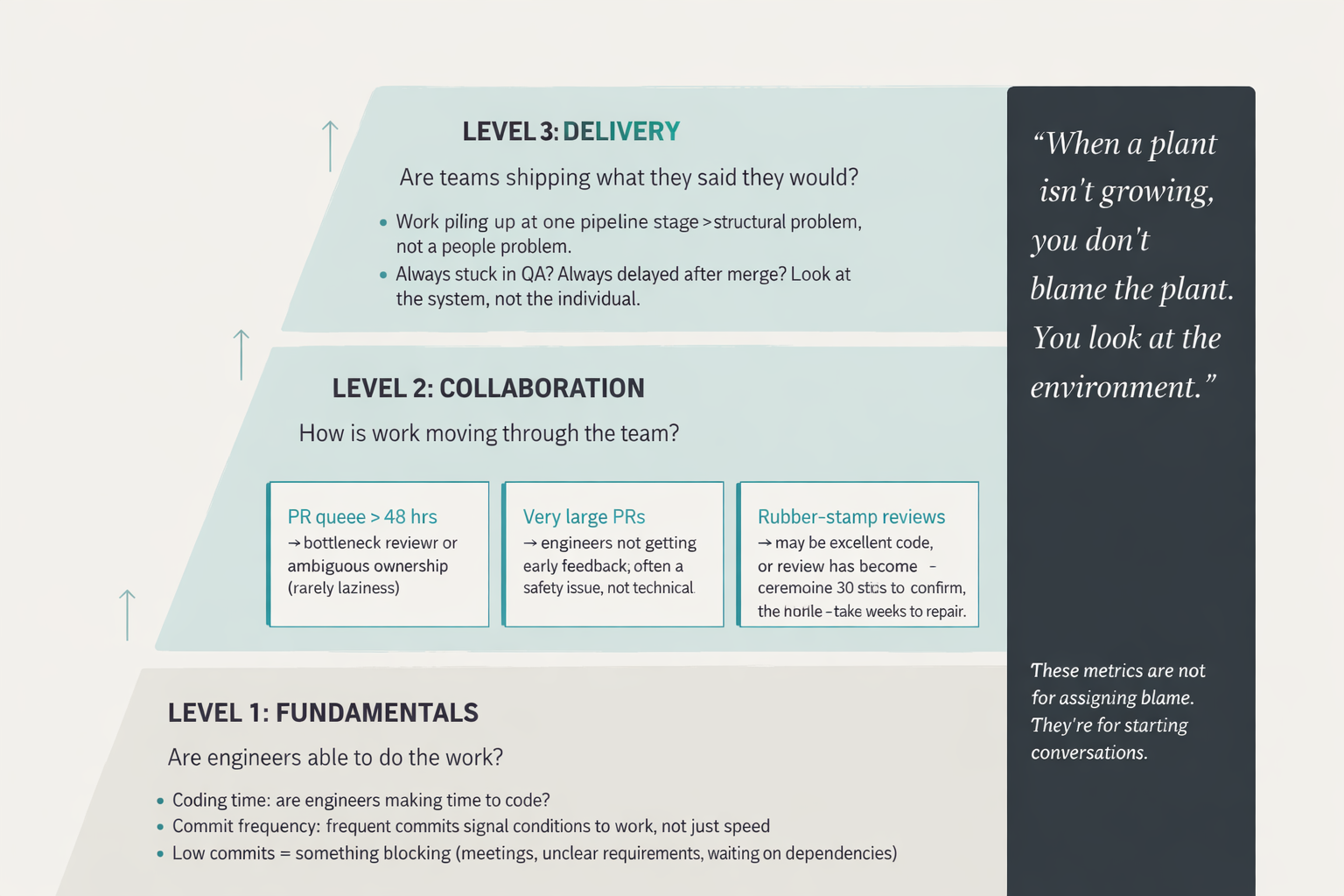

What actually works is a layered, holistic view. I think about it in three levels:

At the primary level are the fundamentals: are engineers making time to code? Are they committing frequently? Frequent commits aren't a measure of how fast someone works; they're a signal of whether someone has the external conditions to get work done. If an engineer isn't committing, something is usually blocking them: meetings, unclear requirements, or waiting on dependencies. Use these fundamental metrics to have honest discussions about the work environment.

At the middle level is collaboration. This is where I think the most valuable engineering quality work actually happens: in pull requests, in review comments, in how quickly a piece of work moves through the system once it's ready for eyes. A few patterns I watch for and what they usually mean:

- PRs sitting in review for more than 48 hours usually signal one of two things: a bottleneck reviewer who's everyone's dependency, or ambiguous ownership where nobody feels responsible. It's rarely laziness.

- Very large PRs often mean engineers aren't getting feedback early enough, or that the work isn't being broken down into reviewable pieces. This is frequently a safety issue, not a technical one. People batch work when they're not confident that partial progress is welcome.

- Rubber-stamp reviews - approvals with no comments - can mean the code is genuinely excellent. They can also mean review has become ceremonial. The distinction matters enormously. One takes 30 seconds to confirm; the other takes weeks to repair.
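For readers who want to see how these three patterns might be pulled out of pull-request data, here is a minimal Python sketch. It assumes a hypothetical `PullRequest` record with the fields shown; it is not Flow's data model or any real tool's API. The 48-hour limit comes from the list above, while the 400-line "large PR" cutoff is an illustrative assumption you would tune for your own team.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# 48 hours comes from the text; the 400-line cutoff is a hypothetical assumption.
REVIEW_WAIT_LIMIT = timedelta(hours=48)
LARGE_PR_LINES = 400


@dataclass
class PullRequest:
    # Hypothetical record shape, for illustration only.
    number: int
    opened_at: datetime
    first_review_at: Optional[datetime]  # None if nobody has reviewed it yet
    lines_changed: int
    approved: bool
    review_comments: int


def review_patterns(pr: PullRequest, now: datetime) -> list[str]:
    """Return pattern labels to raise in a conversation, not a performance score."""
    flags = []
    # Time until the first review, or until now if the PR is still unreviewed.
    waited = (pr.first_review_at or now) - pr.opened_at
    if waited > REVIEW_WAIT_LIMIT:
        flags.append("long review wait")
    if pr.lines_changed > LARGE_PR_LINES:
        flags.append("large PR")
    # Approved with zero comments: either excellent code or ceremonial review.
    if pr.approved and pr.review_comments == 0:
        flags.append("approved without comments")
    return flags


now = datetime(2022, 7, 15, 12, 0)
prs = [
    PullRequest(101, datetime(2022, 7, 12, 9, 0), None, 120, False, 0),
    PullRequest(102, datetime(2022, 7, 14, 9, 0), datetime(2022, 7, 14, 11, 0), 900, True, 0),
]
for pr in prs:
    print(pr.number, review_patterns(pr, now))
# 101 ['long review wait']
# 102 ['large PR', 'approved without comments']
```

The function deliberately returns labels rather than a number. A flag is a prompt to ask what changed in the environment, which keeps the data in service of a conversation instead of a ranking.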

At the highest level is delivery: are teams shipping what they said they would? Where is work getting stuck? What friction is invisible to the people experiencing it? Work that consistently piles up at one stage of the pipeline - always stuck in QA, always delayed after merge - usually points to a structural problem, not a human performance problem.

There's a principle I come back to often when working with leaders who are new to people metrics: when a plant isn't growing, you don't blame the plant. You look at the environment. An engineer who isn't committing, isn't reviewing, isn't shipping - that's almost never a story about the engineer. It's a story about meeting load, unclear priorities, lack of feedback, insufficient support, or some combination of things that the leader has the power to change. The data surfaces the pattern. The leader's job is to ask what in the environment needs to change - whether that's clearing the calendar, providing more recognition, or investing in the training and support that lets someone do their best work.

The critical thing is what you do with these metrics. They are not for assigning blame or for performance management in the punitive sense. They're for starting conversations about things that intuition alone often misses. Bring a pattern to a one-on-one: "I noticed PRs have been sitting longer this month. What do you think might have changed?" More often than not, the engineer already knows exactly why.

This challenge has sharpened considerably in the AI era. When teams adopt AI coding tools, the natural instinct is to start measuring AI. The most convenient proxy is acceptance rate or lines of code attributed to AI. Those metrics have the same problem as any other single-dimension measurement: they illuminate where you're looking, not where the keys actually are. I wrote more about this pattern in Your AI Metrics Are Lying to You.

Giving people access to their own data

One of the most powerful things I've seen - and something I advocate for with every customer we work with - is letting individual contributors see their own metrics.

I spoke with an engineer once who told me her previous company had used Pluralsight Flow, but only managers saw the data. She found out they were tracking things about her and thought: what else do they know? The opacity created suspicion. The metrics that were meant to help ended up feeling like surveillance.

When we flipped that model by giving everyone on the team access to their own data, the conversations that came out of it were extraordinary. We'd say simply: explore this, and come back with one thing you find interesting. One thing you didn't expect, or one thing you want to improve, individually or as a team. Every time we did this, something real emerged. Not because we prompted it correctly, but because people care about their own work. They're curious. Given the right information and the psychological safety to act on it, they will identify improvement opportunities that no leader would have spotted.

That's what ownership looks like. It's also what buy-in looks like. You don't manufacture either one. You enable them.

Belonging is not a special topic

I've been in the tech industry for almost thirty years. Back in the late '90s, I was often the only woman in the room - sometimes the only female engineer, sometimes the only woman in the entire organization. There were situations where I felt I didn't belong. Not because anyone said it explicitly, but through the accumulation of small moments where I felt like a guest instead of a member. When I eventually joined a team where that feeling was absent, it wasn't because belonging had been declared a priority. It was because inclusion was simply how the team operated.

When inclusion is a special topic, it becomes fragile. When it's just how your team thinks, it becomes durable.

Here's what that actually looks like in practice. These are observable signals - things you can look for in any meeting, design review, or planning session:

- Who gets interrupted, and who does the interrupting. If the same people are consistently talked over and the same people are doing the talking over, that pattern is your culture.

- Whose ideas get credited. When someone's suggestion resurfaces twenty minutes later attributed to someone else, notice whether the original speaker was invited back into the conversation.

- Who defaults to silence. In a planning session, the people who say the least aren't always the least engaged. Sometimes they've learned that speaking up doesn't change outcomes.

- What the "optional" activities are, and who attends them. If your team's social events are consistently structured around one demographic's preferences, optionality isn't evenly distributed.

- How new members describe their first three months. Ask a new team member, three months in, whether they feel comfortable saying "I don't know" and whether they know who to ask for what. The answer tells you whether belonging is real or just assumed.

None of these require a survey or a workshop. They require paying attention, consistently, as a habit rather than an initiative.

The transition into leadership is harder than it looks

One of the most consistent failures I see in engineering organizations is the assumption that the best engineer will naturally become the best engineering leader. The logic is understandable: they know the work, they've earned the trust of the team, why wouldn't they lead?

But the skills are genuinely different. Writing great code and enabling other people to write great code are not the same thing. What made you effective as an individual contributor - deep focus, direct ownership of a problem, the satisfaction of finishing something yourself - can actively work against you as a leader.

We've had leaders in our organization who transitioned back to individual contributors. We made that okay. We made it explicit that it was okay, because if we hadn't, they would have left the organization entirely to keep doing the work they loved. Letting go of code is hard, especially when coding is what drew you to this field. But the question I try to help new leaders sit with is: what's the most impactful thing you can do? Grabbing tickets and becoming a bottleneck? Or helping five engineers each become measurably better?

The answer doesn't mean you're done with technical work. You can still pair with engineers, still engage on architecture, still keep your fingernails dirty in the code. The shift is in where your primary leverage comes from.

Retention starts with actually valuing people

I was once interviewing for a leadership role at a small company. The owner made an offhanded remark that engineers were "a dime a dozen."

I had a strong reaction to that. I sat with it afterward, wondering whether I was being oversensitive. Was it because I thought engineers should be treated as special? Was it the memory of how hard it had been to hire great people?

I don't think it was either of those things. I think what bothered me was simpler: you can't build a successful company at any level if you don't genuinely value the people in it. And people can tell when they're not valued. They don't announce it; they just leave. Or they stay and give less.

Putting people first isn't a soft aspiration. It's a precondition. Not people as resources. People as human beings with careers and ambitions and hard days and reasons to care about their work. When they know they're cared for, they bring their best. When they don't, they bring what they have to.

When someone decides to leave, leaders set the tone for how the rest of the team experiences it. If leadership handles the departure with grace - celebrating the opportunity the person is moving toward, being honest about the transition - then the team doesn't panic. They see that it's okay. That the organization is stable. That there's no performance review hidden behind the goodbye.

One of the pieces of feedback that has meant the most to me in my career is that I can be a calming force during change. An engineer once told me that, nearly a year earlier, he had been seriously considering leaving. He stayed because of a discussion we had. I don't know exactly what I said. But I know that the goal of the conversation wasn't to convince him to stay - it was to genuinely understand what would make work feel worth it. Sometimes that's enough.

These lessons don't expire in the age of AI

If anything, the principles from that 2022 conversation feel more urgent now, not less.

The rise of AI-assisted development has introduced a new category of risk to engineering team health - one that doesn't show up in traditional delivery metrics. Teams can be shipping faster than ever while understanding their own systems less and less. Decision rationale goes uncaptured. I've been calling this cognitive drain: engineers describe enjoying the speed of working with AI coding agents, but after months of it, feeling like they don't fully know their own codebase anymore. The code works. Nobody understands why it works the way they used to.

What I've observed is that the teams navigating this best are, predictably, the ones with the strongest psychological safety and the most transparent relationship with their own data. When engineers have access to their own metrics and feel safe raising concerns, they self-identify the problem faster. One team I spoke with realized - through their own data review, not management intervention - that their AI-assisted PRs were getting reviewed significantly faster but also generating more production incidents. That kind of insight only surfaces when engineers feel safe admitting it and are curious enough to look. On teams without that foundation, the same pattern goes undetected until something breaks badly.

Conversely, teams where trust is low tend to adopt AI tools in ways that make the understanding problem worse. Engineers use AI to move faster and hide the fact that they don't fully understand the output - because admitting confusion feels risky. The tools become cover rather than force multipliers. This is exactly the kind of dynamic that doesn't appear in acceptance rate metrics or lines-of-code attributions.

You can't address cognitive drain on a team that doesn't trust its leaders. You can't implement decision-capture practices on a team that's too burned out to slow down. The foundations come first. I've explored what this looks like in practice starting with Cognitive Drain: The Silent Risk of AI-Assisted Development.

Engineering success isn't a puzzle missing one piece. It's a system - teams, culture, measurement, leadership, and above all, people who feel genuinely valued for the work they do and the humans they are. Get those things right, and the output takes care of itself.